Developers have built an AI model trained only on texts published up to 1931.

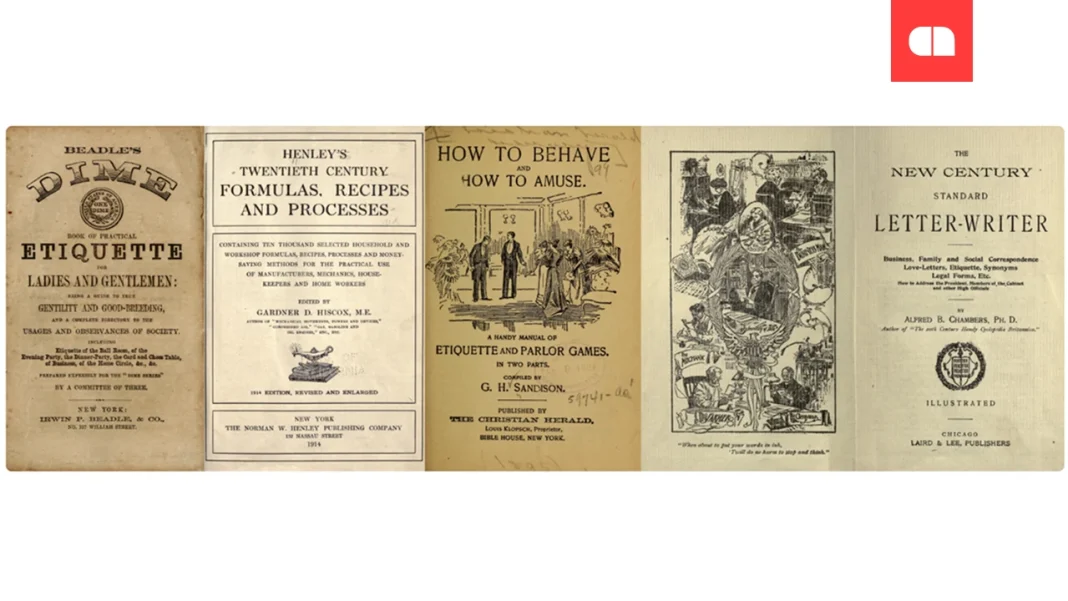

A group of developers led by former OpenAI employee Alec Radford has presented Talkie-1930-13B, a language model trained only on text published up to 1931. The training corpus consists of approximately 260 billion tokens of English text, including books, newspapers, scientific journals, patents, and legal documents.

The experiment lets researchers assess how an AI model behaves when it has only “vintage” knowledge and no modern information. For instance, Talkie-1930 knows nothing about World War II or modern technologies, although newer data occasionally leaks into the corpus.

Despite these limitations, the model handles language well and can perform basic logic and mathematics. Although it has no programming knowledge, it can write simple code when given examples in the prompt. This makes it possible to test an AI’s ability to generalize from what it knows and to make predictions about the future.

One of the main objectives is to determine whether an “old-time” AI model can independently arrive at major ideas, such as the theory of relativity. Two challenges remain, however: the quality of text digitized from old sources and the leakage of modern knowledge into the dataset.
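The leakage problem can be illustrated with a minimal sketch of a heuristic check. This is not the team's method: the cutoff constant, the watch list of terms, and the function name `find_leaks` are all assumptions made for illustration. The check flags a document if it mentions a year later than the cutoff or contains a term from a hand-picked list of post-cutoff vocabulary.

```python
import re

# Assumption: treat 1931 as the last year allowed in the corpus.
CUTOFF_YEAR = 1931

# Hypothetical watch list of terms that only became common after the cutoff.
MODERN_TERMS = {"internet", "smartphone", "world war ii"}

# Matches four-digit years from 1500 through 2099.
YEAR_PATTERN = re.compile(r"\b(1[5-9]\d{2}|20\d{2})\b")


def find_leaks(text):
    """Return a sorted list of signals that the text may post-date the cutoff."""
    lowered = text.lower()
    reasons = set()

    # Signal 1: an explicit year later than the cutoff.
    for match in YEAR_PATTERN.finditer(text):
        year = int(match.group())
        if year > CUTOFF_YEAR:
            reasons.add(f"year:{year}")

    # Signal 2: vocabulary from the watch list.
    for term in MODERN_TERMS:
        if term in lowered:
            reasons.add(f"term:{term}")

    return sorted(reasons)
```

A check like this only flags candidates for review. A genuine 1925 text can mention a future year, and a modern footnote added during digitization can avoid every term on the list.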

The team is currently focused on scaling the model up to the level of early versions of ChatGPT and on expanding the corpus to include texts in other languages.

| Model | Training tokens | Capabilities | Challenges |
| --- | --- | --- | --- |
| Talkie-1930-13B | 260 billion | Language understanding, logic, basic mathematics | Data quality, leakage of newer data |